Our Faculty

The AI Evaluation Programme brings together leading researchers and practitioners from institutions around the world: people who decided this work was important enough to show up for and who are building the methods as they teach them.

They represent a broad range of disciplines — from machine learning and statistics to governance, cognitive science, and policy — reflecting the inherently interdisciplinary nature of AI evaluation as a field.

We are lucky to have them share what they know. Here they are.

Adam Gleave

FAR.AI

Co-founder and CEO of FAR.AI, Dr. Adam Gleave holds a PhD from UC Berkeley, advised by Stuart Russell. His research focuses on value learning, robust reinforcement learning, alignment and the safe deployment of advanced artificial intelligence. He is recognized for his expertise in developing reliable and ethical AI systems.

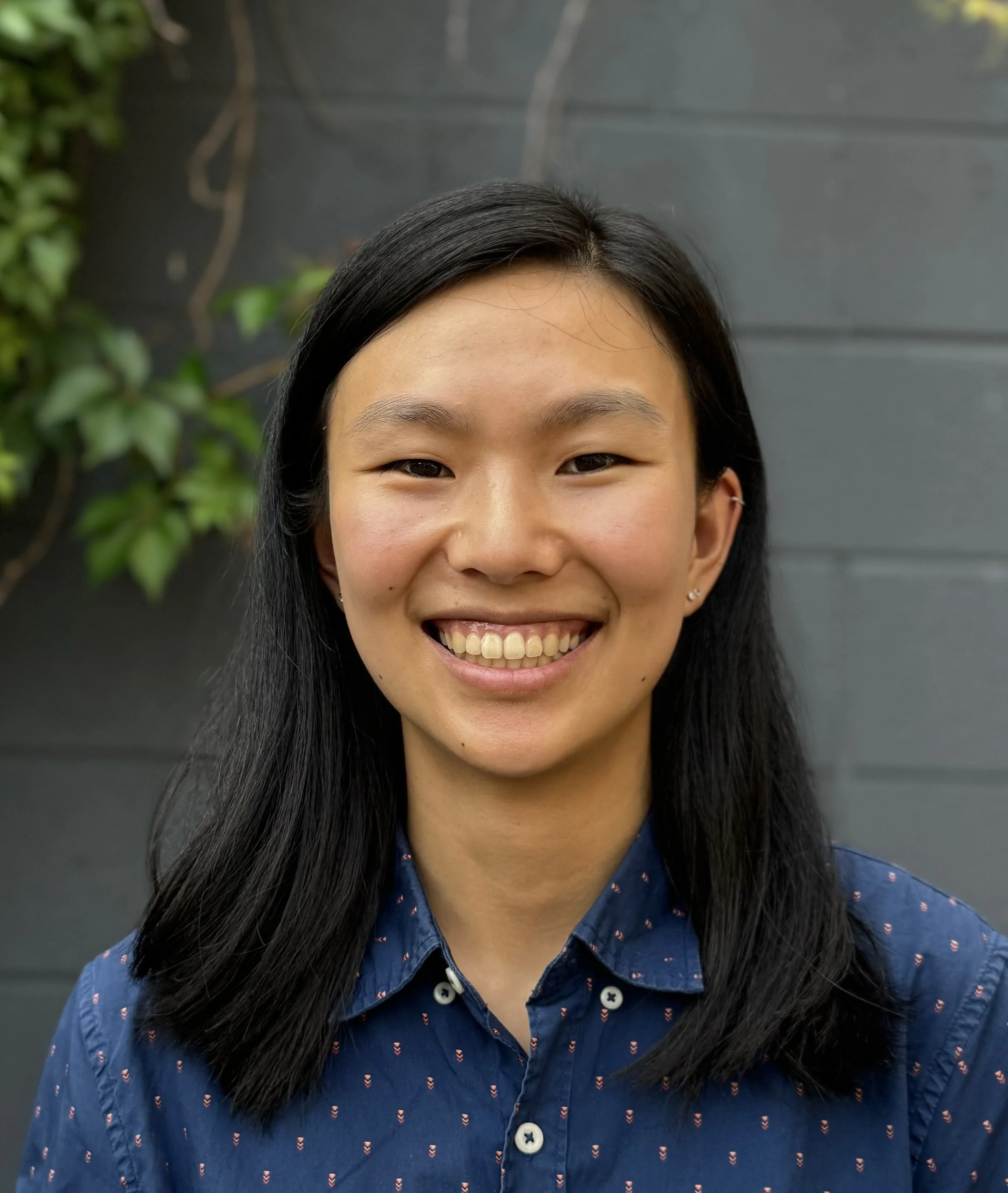

Angelina Wang

Cornell University

Dr. Angelina Wang is an Assistant Professor at Cornell Tech and the Department of Information Science at Cornell University, with additional affiliations in Computer Science and Data Science. Her research focuses on responsible AI, particularly AI fairness, evaluation of generative AI, and the societal impacts of AI. Dr. Wang's work has been recognized in leading conferences and covered by major media outlets. She has received several prestigious awards and fellowships, and previously held a postdoctoral position at Stanford University HAI.

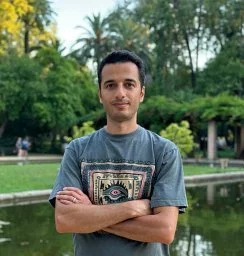

Behzad Mehrbakhsh

Technical University of Valencia (UPV)

Behzad Mehrbakhsh is an AI researcher affiliated with ValgrAI and VRAIN, and is a PhD candidate at the Polytechnic University of Valencia. His research focuses on artificial intelligence evaluation, data contamination, and adversarial benchmarking. Mehrbakhsh has contributed to studies on predictive AI, confounders in data analysis, and the robustness of content-based image retrieval systems. His work is published in leading conferences and preprint archives, reflecting expertise in both theoretical and applied aspects of AI.

Callum McDougall

Alignment Research Engineer Accelerator (ARENA)

Callum McDougall is a research scientist on Google DeepMind’s interpretability team and the founder of the Alignment Research Engineer Accelerator (ARENA). He led the first three iterations of the ARENA program and has contributed to open-source projects in mechanistic interpretability. Currently, he serves in an advisory capacity for ARENA and focuses on AI safety research.

Cèsar Ferri

Technical University of Valencia (UPV)

Dr. Cèsar Ferri Ramírez is a professor in the Department of Computer Systems and Computation at the Universitat Politècnica de València and serves as Assistant Dean for University-Enterprise Relations at the School of Computer Science ETSINF. He is a member of the Valencian Graduate School and Research Network of Artificial Intelligence (ValgrAI) and has been part of the DMIP research team within VRAIN since 1999. His research focuses on machine learning and artificial intelligence, with numerous publications in leading journals and conferences. Dr. Ferri Ramírez holds an MSc and a PhD in Computer Science from the Universitat Politècnica de València, with his doctoral work centered on declarative languages for machine learning.

Cozmin Ududec

UK AI Security Institute

Dr. Cozmin Ududec leads the Science of Evaluations team at the AI Security Institute. He was previously Chief Scientist at Invenia Labs, an applied ML startup focused on optimising electricity grids. He holds a Ph.D. in Physics from the University of Waterloo and the Perimeter Institute, with research interests spanning machine learning, AI interpretability and safety, quantum theory, and the economics of energy systems. Dr. Ududec has extensive expertise in applied research, including high-dimensional time series modeling, probabilistic machine learning, and electricity market operations. He is also skilled in organizational design, project management, and technical leadership within fast-growing, research-driven environments.

Fazl Barez

University of Oxford

As a Senior Research Fellow at the University of Oxford, Dr. Fazl Barez leads research on Technical AI Safety and Governance, focusing on bridging technical innovation with policy and real-world impact. His work includes developing algorithms adopted by major AI labs, advising on policy at governmental levels, and commercializing interpretability research. Dr. Barez has led projects with the UK AI Security Institute, contributed to the Alan Turing Institute's policy responses, and collaborated with Anthropic's Alignment team on AI safety topics. His research is supported by leading organizations such as OpenAI, Anthropic, and NVIDIA, and he holds affiliations with institutions including Cambridge's CSER and ELLIS.

Jaime Sevilla

Epoch

Director and Co-Founder of Epoch AI, Jaime Sevilla specializes in researching the future of artificial intelligence, machine learning trends, and global risk forecasting. With a background in mathematics and computer science, his work focuses on understanding the drivers of AI innovation and projecting its long-term development. Sevilla also serves as an advisor to the Observatorio de Riesgos Catastróficos Globales and as a research affiliate at the Centre for the Study of Existential Risk at Cambridge University, contributing to the management of global risks associated with emerging technologies.

Jérémy Scheurer

FAR.AI

Jérémy Scheurer is a research scientist specializing in deep learning and AI alignment, with nine years of experience in both academic and applied machine learning settings. He is currently a visiting researcher at New York University and has held positions at Apollo Research, OpenAI, ETH Zürich, and the Max Planck Institute for Software Systems. His work focuses on ensuring AI systems reliably align with human intentions, and he is recognized for his contributions to practical alignment research and responsible AI deployment. Jérémy holds both a Bachelor's and Master's degree in Computer Science from ETH Zürich.

Joaquin Vanschoren

TU Eindhoven / MLCommons

An associate professor at TU Eindhoven, Joaquin Vanschoren leads the Advanced Models through Open Research and Engineering (AMORE) group, focusing on advancing and democratizing AI systems. He founded OpenML, an open science platform for machine learning, and serves as editor-in-chief of the DMLR journal. His leadership roles include inaugural chair of the NeurIPS Datasets and Benchmarks track, co-chair of the MLCommons AI Risk & Reliability working group, and co-founder of the Croissant standard for AI resource sharing. A founding member of ELLIS and CAIRNE, Joaquin is recognized for his contributions to AutoML and data-centric AI and has received several prestigious awards.

Joel Z. Leibo

Google DeepMind

Dr. Joel Z. Leibo is a Senior Staff Research Scientist at Google DeepMind and a visiting professor at King's College London. He specializes in multi-agent reinforcement learning and the development of human-compatible artificial intelligence. With a PhD from MIT in computational neuroscience and machine learning, his research focuses on leveraging insights from human biological and cultural evolution to inform AI development. He is particularly interested in applying theories of cooperation from cultural evolution and institutional economics to create ethical and effective AI systems.

Jonathan Prunty

University of Cambridge

Jonathan Prunty is a Research Associate with the Kinds of Intelligence programme at the Leverhulme Centre for the Future of Intelligence, where he develops cognitive assessments to evaluate and align AI and human capabilities in workplace tasks. His interdisciplinary background spans developmental psychology, neuroscience, and cognitive science. Prior to his current role, he held postdoctoral positions at the University of Kent in health and social care economics and cognitive psychology. His recent publications address topics such as AI evaluation, intuitive reasoning in humans and language models, and the impact of digital tools and strengths-based care in social care settings.

José Such

King's College London

Prof. José Such is a Professor of Computer Science at King's College London and a researcher at VRAIN (Valencian Research Institute for Artificial Intelligence) at the Universitat Politècnica de València. He is Director of the King's Cybersecurity Centre, an EPSRC-NCSC Academic Centre of Excellence in Cyber Security Research. His work sits at the intersection of security, privacy, and AI — with a particular focus on evaluating how AI systems can be exploited maliciously, how users can be helped to manage their privacy online, and how to make AI-based systems more privacy-aware, secure, and transparent. He leads the HASP Lab (Human-Centred AI Security, Ethics, and Privacy) at VRAIN-UPV.

Katie Collins

Massachusetts Institute of Technology

Dr. Katie Collins is a Postdoctoral Fellow in the Computational Cognitive Science Group at MIT and a Visiting Postdoc at the Princeton AI Lab, with additional affiliation at the Centre for Human-Inspired AI at the University of Cambridge. Her research focuses on how humans and machines reason about novel systems of rules and reward, with an emphasis on the interplay between human cognition and artificial intelligence. She employs computational and mathematical modeling, often through games, to explore strategic reasoning, decision-making, and the design of AI systems that complement human agency. Dr. Collins holds a PhD in Engineering from the University of Cambridge and has prior experience at Google DeepMind and the Leverhulme Centre for the Future of Intelligence, as well as a strong record of interdisciplinary collaboration and workshop organization in AI and behavioral sciences.

Laura Weidinger

Google DeepMind

Laura Weidinger is a leading researcher in AI ethics, specializing in the safety, transparency, and alignment of advanced AI systems with human values. Her work focuses on sociotechnical approaches to AI safety evaluation, early risk assessment, and the development of accountable AI innovation ecosystems. She has contributed to flagship projects at DeepMind and developed widely used frameworks for identifying ethical and social harms from generative AI. A frequent keynote speaker at major academic and policy institutions, Weidinger is recognized for her leadership in advancing responsible AI research and evaluation methods.

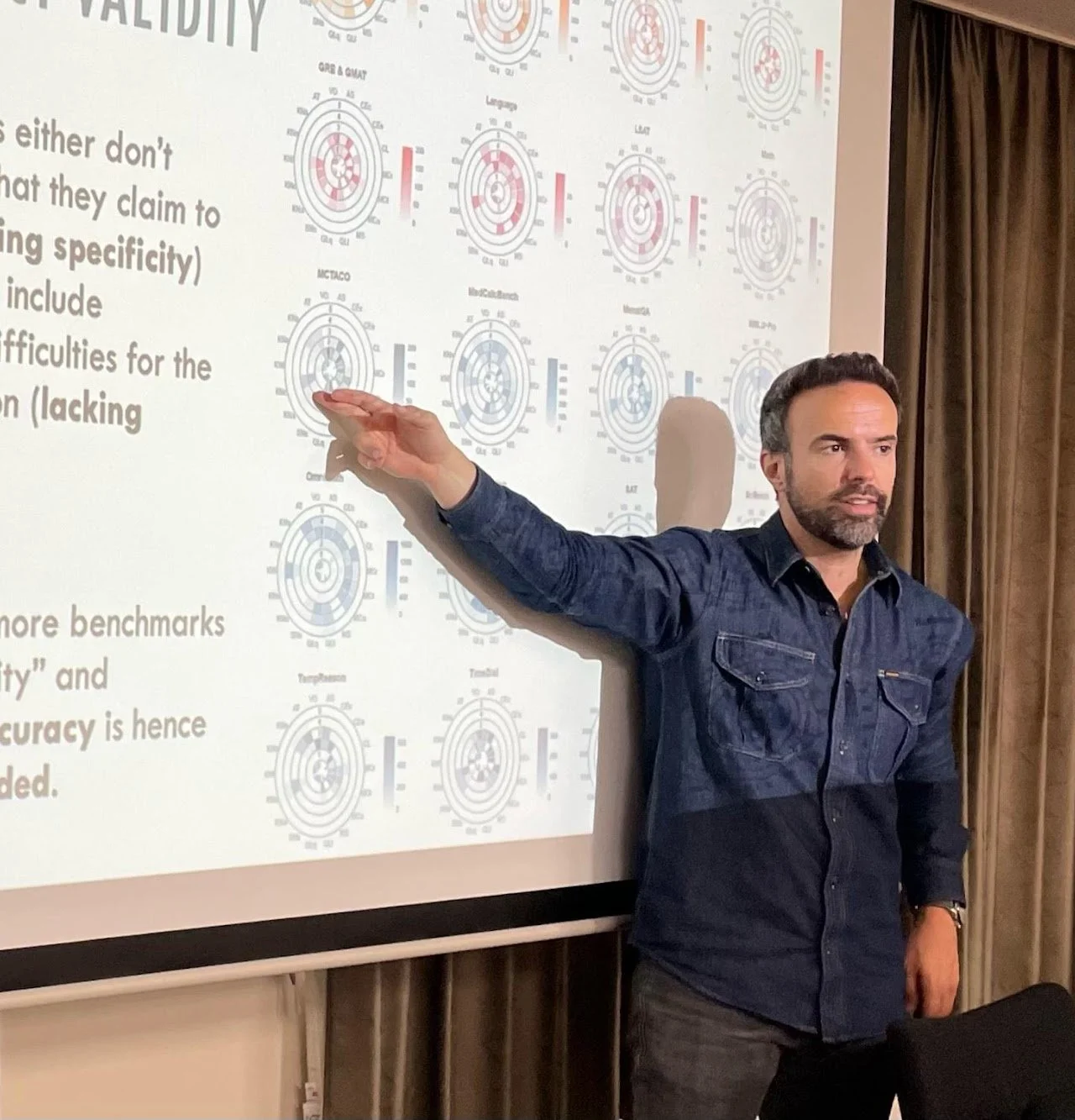

Lexin Zhou

Princeton University

Currently a PhD candidate in Computer Science at Princeton University, Lexin Zhou focuses on evaluating AI capabilities, generalization, societal impact, and safety, particularly in general-purpose AI systems such as large language models and agents. His work emphasizes the development of robust, valid, and systematic evaluation frameworks to better explain and predict AI performance beyond narrow benchmarks. He has held research and consultancy roles at leading organizations including Microsoft Research, OpenAI, Meta AI, and the European Commission JRC. Lexin's research has been featured in prominent outlets such as Nature, the Financial Times, and MIT Technology Review.

Liming Jiang

Beijing Normal University

Based in Beijing, Dr. Liming Jiang is a Research Scientist at Microsoft Research Asia. Her work is situated within a leading global research organization, contributing to advancements in technology and computer science. Her role involves conducting innovative research in collaboration with academic and industry partners.

Line Clemmensen

DTU (Technical University of Denmark)

Dr. Line H. Clemmensen is a Full Professor in the Department of Applied Mathematics and Computer Science at the Technical University of Denmark. Her research focuses on statistical modeling, machine learning, and AI evaluation in low-resource domains, with particular emphasis on fairness and interpretability. She specializes in high-dimensional data analysis, including regularized statistics and advanced machine learning techniques. Clemmensen holds both an M.S. and Ph.D. from the Technical University of Denmark.

Lorenzo Pacchiardi

University of Cambridge

An Assistant Research Professor at the Leverhulme Centre for the Future of Intelligence, University of Cambridge, Dr. Lorenzo Pacchiardi leads a project on benchmarking large language models' data science capabilities, funded by Open Philanthropy. His research focuses on AI evaluation, predictability, and cognitive assessment, with collaborations involving prominent scholars and contributions to the AI evaluation newsletter. He has significant experience in EU AI policy, co-founding the Italian AI policy think tank CePTE and engaging in initiatives related to AI standards and research rigor. Dr. Pacchiardi's academic background includes a PhD in Statistics and Machine Learning from Oxford, with expertise in Bayesian inference, generative models, and probabilistic forecasting.

Lucy Cheke

University of Cambridge

Dr. Lucy Cheke is an Associate Fellow at the Leverhulme Centre for the Future of Intelligence, contributing to the Kinds of Intelligence Programme. Her research explores how the brain forms and manipulates representations of events beyond immediate perception, including episodic memory, episodic foresight, and causal reasoning. She currently investigates the bidirectional relationship between memory and obesity, focusing on how obesity-related brain changes may impact memory and, in turn, influence consumption regulation.

Manuel Cebrián

Spanish National Research Council (CSIC)

Dr. Manuel Cebrián is a Senior Scientist at the Spanish National Research Council, specializing in Computational Social Science and Artificial Intelligence. He has held research and academic positions at leading institutions including the Max Planck Society, MIT, and UC San Diego. Dr. Cebrián has contributed to significant projects such as the DARPA Network Challenge, pioneering digital contact tracing, and developing frameworks for Machine Behaviour. His work is widely published in top scientific journals and has received international media coverage.

Marko Tešić

DSIT - UK Government

Dr. Marko Tešić is a Senior AI Analyst at the Department for Science, Innovation and Technology, focusing on the impacts of AI on labor markets, productivity, and workforce change, as well as supporting policy on AI skills and adoption. Previously, he was a Research Associate at the Leverhulme Centre for the Future of Intelligence, University of Cambridge, where he evaluated AI benchmarks and large language models, and mapped AI capabilities to job tasks. His research background includes a postdoctoral fellowship with the Royal Academy of Engineering, investigating how explanations of AI predictions influence beliefs, with expertise in data analysis and Bayesian modeling. Dr. Tešić holds a Ph.D. in Psychology, an M.A. in Logic and Philosophy of Science, and a B.A. in Philosophy.

Mohammad Taufeeque

FAR.AI

Mohammad Taufeeque is a research engineer at FAR.AI with a background in Computer Science and Engineering from IIT Bombay. His expertise includes neural text classification and adapting machine learning models to out-of-distribution data. He has research experience at Microsoft Research, focusing on advanced applications of neural networks.

Patricia Paskov

RAND

Patricia Paskov is a researcher at RAND and a Research Affiliate at the Oxford Martin AI Governance Initiative, specializing in AI evaluation, policy, and governance. Her work has been published in leading machine learning conferences and policy venues, and she serves as the Resilience section lead for the 2026 International AI Safety Report. Patricia has experience building machine learning models in industry and managing large-scale randomized controlled trials on topics such as financial inclusion, mental health, and education. She also co-founded NYAIGS and is active in developing the AI governance and safety community in New York City.

Peter Flach

University of Bristol

Prof. Peter Flach is Professor of Artificial Intelligence at the University of Bristol and an internationally recognized expert in machine learning, data science, and human-centred artificial intelligence. His research focuses on the evaluation and improvement of machine learning models, particularly through ROC analysis and the integration of logic and probability. He has served as Editor-in-Chief of the Machine Learning journal and held leadership roles in major international conferences and associations in data science. Prof. Flach currently directs UKRI Centres for Doctoral Training in Interactive and Practice-Oriented Artificial Intelligence, with research interests spanning data-driven computational methods and responsible AI.

Peter Romero

Technical University of Valencia (UPV)

Peter Romero leads research projects and educational programs in people analytics and is currently a researcher at Keio University in Tokyo. With a diverse educational background from Hamburg, Tokyo, and Cambridge, he has extensive teaching experience at various academic levels and in executive education. He brings 20 years of experience in the talent business, including roles in R&D and consultancy, and has managed global People Analytics projects with leading organizations. As a founding member of Advanced Cognition Labs, his research focuses on natural language processing, cybernetics, and people analytics, particularly in areas such as deep learning, Bayesian learning, and multi-agent assessment methodologies.

Sanmi Koyejo

Stanford University

Dr. Sanmi Koyejo is an Assistant Professor of Computer Science at Stanford University and an Adjunct Associate Professor at the University of Illinois at Urbana-Champaign. He leads the Stanford Trustworthy AI Research (STAIR) group, which focuses on developing measurement-theoretic foundations for trustworthy AI systems, including AI evaluation science, algorithmic accountability, and privacy-preserving machine learning. Dr. Koyejo's applied research extends to healthcare, neuroimaging, and scientific discovery. He is affiliated with several Stanford institutes and groups, including SAIL, HAI, CRFM, AIMI, AI Safety, Machine Learning Group, and Bio-X.

Seán Ó hÉigeartaigh

University of Cambridge

Dr. Seán Ó hÉigeartaigh is Associate Director (Research Strategy) and Programme Director for the AI: Futures and Responsibility Programme at the Leverhulme Centre for the Future of Intelligence. His research focuses on the governance, foresight, and global risks associated with advanced artificial intelligence and other emerging technologies. He has held several distinguished leadership roles, including founding Executive Director of the Centre for the Study of Existential Risk at the University of Cambridge, and has advised organizations such as the OECD, United Nations, and UK Government on AI and catastrophic risk. Seán’s expertise spans technology policy, catastrophic risk, genomics, and bioengineering, and he has played a central role in international collaborations on AI governance and risk.

Steph Guerra

RAND

Steph Guerra is a molecular biologist and biosecurity expert specializing in the intersection of artificial intelligence and biotechnology. She leads the AIxBio portfolio at the RAND Center on AI, Security, and Technology, focusing on research that advances security, innovation, and public good through technology and policy. Previously, she served as Head of Strategic Partnerships at the U.S. Artificial Intelligence Safety Institute at NIST, evaluating national security risks of advanced AI systems. Her experience includes roles at the White House Office of Science and Technology Policy, the Department of Veterans Affairs, and the National Academies of Sciences, with a focus on science policy, biodefense, and public health.

Stuart Elliott

Organisation for Economic Co-operation and Development (OECD)

Dr. Stuart W. Elliott is a Senior Analyst at the OECD, where he leads the "AI & the Future of Skills" project. He holds a doctorate in economics from MIT and has postdoctoral training in cognitive psychology from Carnegie Mellon University. His research focuses on the intersection of artificial intelligence, skill demand, and education, and he authored the influential 2017 CERI report on Computers and the Future of Skill Demand. Elliott is also a scholar at the National Academies of Sciences, Engineering, and Medicine, where he has led studies on educational assessment, science and 21st-century skills, and workforce productivity.

Thomas Dietterich

Oregon State University

Dr. Thomas G. Dietterich is a Distinguished Professor Emeritus and a pioneering figure in machine learning, recognized for foundational contributions to ensemble methods, hierarchical reinforcement learning, and AI robustness. He has advanced the field through influential research on error-correcting output coding, the multiple-instance problem, and the integration of regression trees into probabilistic graphical models. Dietterich has held prominent leadership roles, including president of the Association for the Advancement of Artificial Intelligence and founding president of the International Machine Learning Society. He has received major awards for his service and continues to contribute as a journal editor, program chair, and advisor to research organizations.

Tom Cunningham

METR

Currently at METR, Tom Cunningham specializes in the economic impact and capabilities of artificial intelligence, with prior experience at OpenAI focusing on economic research and data science. His expertise spans AI economics, large language model analytics, content moderation, experimentation, network effects, business models, and advertiser behavior, informed by senior roles at OpenAI, Twitter, and Meta. He has contributed to influential research and policy discussions, including Congressional investigations and academic publications on experimentation and cognition. Tom's academic background includes positions at Caltech, the Institute for International Economic Studies, Tel Aviv University, and Harvard University.

Tyler Tracy

Redwood Research

Tyler G. Tracy is a Member of Technical Staff at Redwood Research, focusing on high-stakes AI control and safety protocols. His research includes red-teaming AI systems and agent foundations, with published work on computational complexity in tile assembly models. He has extensive software engineering experience, including roles at SupplyPike, Insurgrid, Tesseract, and Google Cloud, and is the co-founder and CTO of Sider. Tyler also founded the NWA AI Meetup and has held leadership positions in academic and professional organizations related to computer science and AI.

Wout Schellaert

EU AI Office

Dr. Wout Schellaert is a Technology Specialist at the AI Office of the European Commission in Brussels, focusing on the evaluation of general-purpose artificial intelligence and related policy. He holds a PhD from the Universitat Politècnica de València, where his research centered on AI evaluation. Wout has also been a student fellow at the Leverhulme Centre for the Future of Intelligence in Cambridge. His expertise lies in the assessment and policy implications of advanced AI systems.

Xiaoyuan Yi

Microsoft Research Asia

Dr. Xiaoyuan Yi is a researcher at the Social Computing Group in Microsoft Research Asia, specializing in Natural Language Generation (NLG). His work focuses on responsible and creative NLG, including debiasing, interpretability, and the automatic generation of literary texts such as poetry and stories, as well as intelligent writing tools. He led the development of the Jiuge classical Chinese poetry generation system at Tsinghua University and has published in leading conferences such as AAAI, IJCAI, ACL, EMNLP, and CoNLL. Additionally, he serves as a program committee member for major conferences in the field.